
The new federal accountability push in OBBB treats post-graduation earnings as a straightforward proxy for program value: hit the threshold or lose access to student loans. And later this year, we will start using earnings data to judge colleges at scale.

But three recent Ithaka S&R reports make a deeper point: earnings are not a clean institutional output. They are the joint product of labor markets, geography, occupational pathways, and time. When we treat them as a direct measure of college quality, we misattribute cause and distort institutional behavior in ways that ultimately hurt the students the policy is meant to protect.

Phil has been doing some of the clearest work unpacking these measures, but I have been uncomfortable with additional, deeper problems. It is not just a question of a coarse metric; it is a question of causality. These measures attribute earnings to institutions and programs when those earnings are in fact shaped by labor markets, occupational structures, career pathways, and time. Earnings are not a simple institutional output. They are the product of systems.

The Ithaka research focuses on South Carolina, but the dynamics it reveals—how earnings are shaped by labor markets, program structure, and pathways—are not unique to that state.

What the Ithaka reports reveal

The three Ithaka reports make this breakdown in attribution visible in several ways. They show how earnings vary across labor markets, how they differ within and across fields and programs, and how they reflect the pathways graduates follow into the workforce. Across each of these dimensions, one point becomes clear: earnings are not a single, stable outcome. They are shaped by context, structure, and time.

Let’s look deeper at the four dimensions of interest.

1) Geography is doing more work than we admit

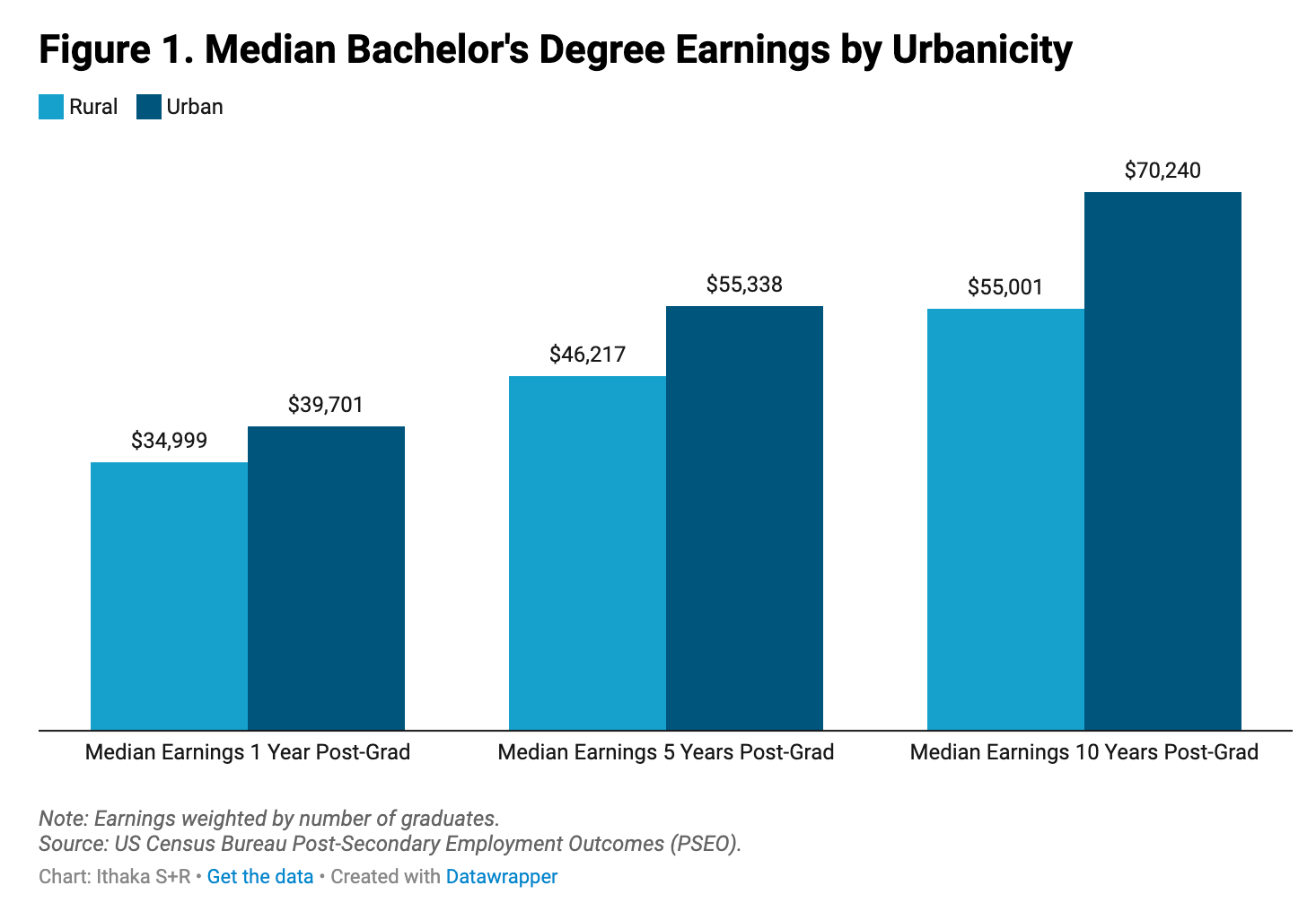

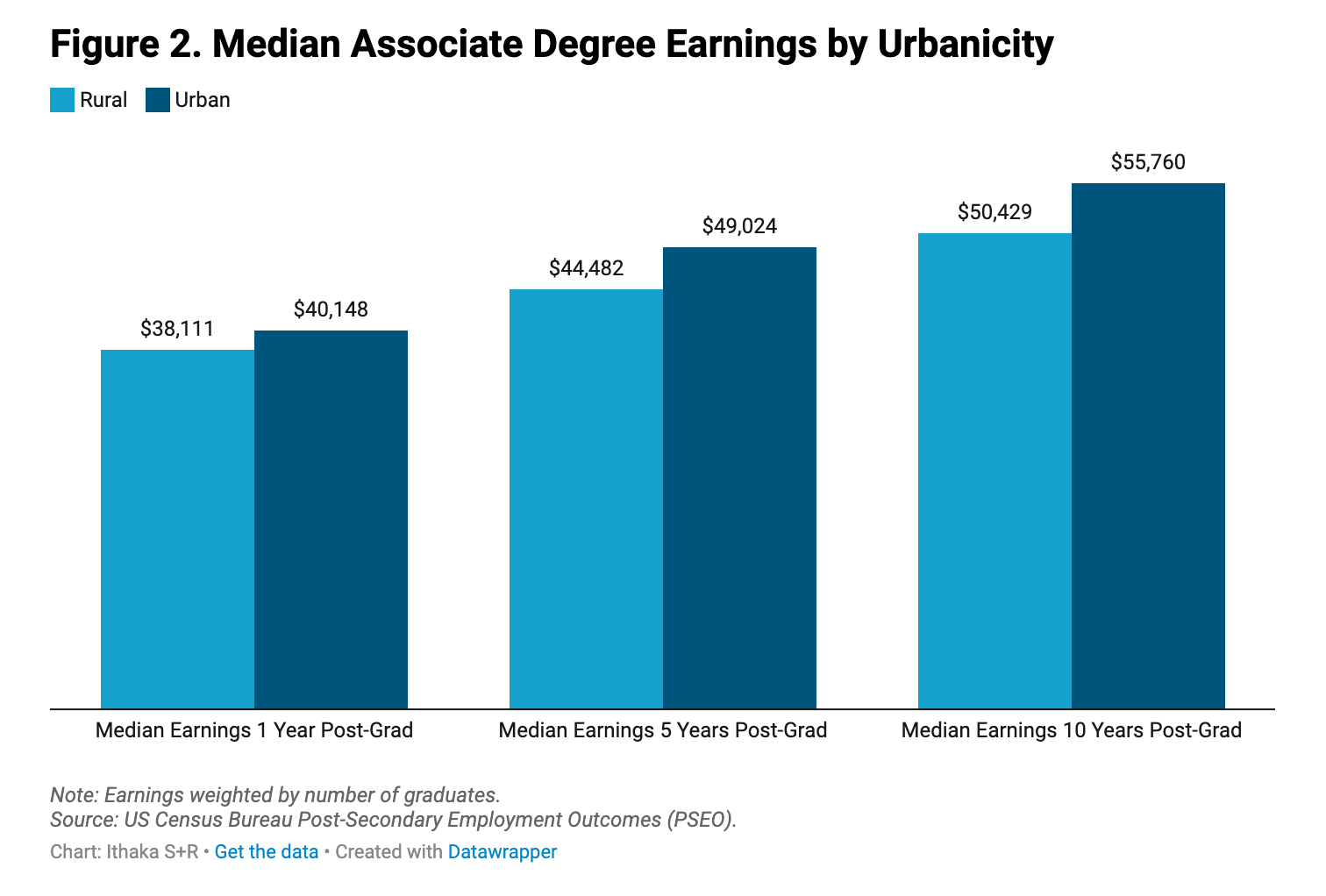

If you take nothing else from the Ithaka reports, take this: earnings differ significantly between graduates of urban and rural institutions, and those differences grow over time.

Across disciplines, graduates of urban institutions in South Carolina earn more than graduates of rural institutions. Ten years after completing, bachelor’s degree graduates of urban institutions earn approximately $62,200, compared with $55,000 for their peers from rural institutions. The gap is even larger among associate degree holders: 10 years after graduation, urban graduates earn roughly $55,600 and graduates of rural institutions earn $46,900.
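The size of these gaps is easy to check from the figures just quoted. Here is a minimal Python sketch that uses only those four ten-year earnings numbers. The function name, and the choice to express the gap as a share of rural earnings, are illustrative assumptions rather than anything taken from the report.

```python
# Ten-year earnings figures quoted in the paragraph above (South Carolina).
EARNINGS = {
    "bachelor's": {"urban": 62_200, "rural": 55_000},
    "associate": {"urban": 55_600, "rural": 46_900},
}


def urban_rural_gap(urban, rural):
    """Return the dollar gap and the gap as a share of rural earnings."""
    gap = urban - rural
    return gap, gap / rural


for degree, e in EARNINGS.items():
    gap, share = urban_rural_gap(e["urban"], e["rural"])
    print(f"{degree}: ${gap:,} gap ({share:.1%} of rural earnings)")
```

The gap works out to $7,200 for bachelor's graduates and $8,700 for associate degree holders, so the associate gap is larger both in dollars and relative to rural earnings.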

It would be a mistake to interpret the lower earnings of graduates from rural institutions as evidence that those institutions or their programs are lower value. What the report makes clear is that these earnings are largely a function of where graduates work. Rural institutions serve rural students, and those students are more likely to remain in rural labor markets where wages are lower but aligned with local economic conditions. In that sense, the outcomes are not surprising: they are consistent with the structure of those labor markets. The problem is not the outcomes; it is the attribution. We are reading system-level wage structures as if they were institutional performance.

2) Earnings dispersion cannot be captured by a single median

Geography is only part of the story. The Ithaka researchers also show that earnings vary dramatically within fields. In some majors, the difference between graduates at the 25th and 75th percentiles can be as high as fivefold. In others, earnings are much more tightly clustered. The report implicitly points to three distinct types of programs with different earnings structures.

Pipeline programs, where outcomes are relatively predictable: Earnings are tightly distributed, and even graduates at the lower end of the distribution tend to earn at or above a living wage. These programs function as pipelines into specific fields, such as nursing and engineering.

High-variance programs, where there is substantial upside but also significant risk: Top earners do extremely well, but outcomes are highly uneven, with large gaps between those at the top and bottom of the distribution. Biomedical sciences and computer programming are good examples.

Low-floor programs, where the variance is relatively small but earnings are consistently low, often falling below living wage thresholds: Social work, mental health and human services, and parks and recreation fall into this category.

In fields where outcomes are tightly clustered, median earnings are at least a more stable summary. But in high-variance fields, the median obscures more than it reveals. It collapses wide differences in outcomes into a single number. In other words, the same median can describe stability, risk, or inequality.

But the problem is not just that medians are imperfect summaries. It is that they summarize the wrong thing. In many fields, there is no single earnings outcome to summarize. There are multiple trajectories, shaped by how the field structures opportunity, specialization, and advancement. Some graduates access high-paying pathways; others do not. That divergence is not simply a reflection of program quality—it is built into the way the labor market for that field operates. When we reduce that structure to a single median and use it to judge institutions, we are not measuring program effectiveness. We are averaging across fundamentally different futures.
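To make the point about medians concrete, here is a small Python sketch. The two earnings lists are invented for illustration and are not data from the Ithaka reports; the helper `summarize` and the use of a 75th-to-25th percentile ratio as the spread measure are also assumptions.

```python
from statistics import median, quantiles

# Hypothetical earnings for two invented programs; not data from the reports.
PIPELINE = [48_000, 50_000, 52_000, 54_000, 56_000]
HIGH_VARIANCE = [20_000, 30_000, 52_000, 90_000, 150_000]


def summarize(earnings):
    """Return the median and the ratio of 75th to 25th percentile earnings."""
    q1, _, q3 = quantiles(earnings, n=4)  # the three quartile cut points
    return median(earnings), q3 / q1


for name, data in [("pipeline", PIPELINE), ("high-variance", HIGH_VARIANCE)]:
    mid, spread = summarize(data)
    print(f"{name}: median ${mid:,}, p75/p25 ratio {spread:.1f}")
```

Both invented programs report the same $52,000 median, yet the spread between the upper and lower quartiles is several times wider in the second. A threshold applied to the median alone cannot tell them apart.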

3) Some degrees produce occupations, others produce possibilities

If earnings variation is not noise, then we need to explain where it comes from. The answer lies in the pathways that different programs create. Some degrees lead into relatively well-defined occupational tracks. Others open onto a much wider and less predictable set of possibilities. The difference is structural—and it becomes visible when we shift our focus from earnings levels to where graduates actually go.

The Ithaka analysis shows that post-graduation pathways vary significantly across programs. Some, such as healthcare, have highly concentrated pathways, with most graduates entering the same industry. Others, including business and computer and information sciences, disperse graduates across a wide range of industries.

Concentrated pathways, where a large share of graduates from a program work in the same industry, may indicate strong pipelines into specific industries, while diffuse pathways, where graduates are spread across many industries, may provide greater options for employment after graduation but less upfront clarity about likely employment outcomes for students.

To capture this difference, the researchers construct an Industry Concentration Index for each program, using a standard measure of how evenly items are distributed. Higher values indicate that graduates cluster into a small number of industries, while lower values indicate that they disperse across many.

Table 1: Program dispersion across a range of associate degree programs, Year 1 after graduation

| Program | Industry Concentration (Year 1) | Top Industry (Year 1) | Top Industry Share (Year 1) |
|---|---|---|---|
| Health Professions and Related Programs | 0.82 | Health Care and Social Assistance | 90% |
| Precision Production | 0.46 | Manufacturing | 67% |
| Business, Management, Marketing | 0.09 | Retail Trade | 14% |
| Computer and Information Sciences and Support Services | 0.09 | Manufacturing | 13% |

Adapted from Industry Concentration and Workforce Pathways in South Carolina: Findings from Postsecondary Employment Outcomes (PSEO) Data
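The report's index is described here only as a standard measure of how evenly items are distributed. One common measure of that kind is the Herfindahl-Hirschman Index, the sum of squared shares. The Table 1 values are at least consistent with it (0.90 squared is 0.81, close to the 0.82 reported for health professions), but that is an assumption, not something confirmed by the source. The sketch below uses hypothetical industry shares; only the top share in each list echoes Table 1.

```python
def concentration_index(shares):
    """Sum of squared industry shares (Herfindahl-Hirschman style).

    Assumption: the exact formula used in the report is not stated here.
    The result is 1.0 when every graduate works in one industry and
    1/k when graduates are spread evenly across k industries.
    """
    total = sum(shares)  # normalize so the shares sum to 1
    return sum((s / total) ** 2 for s in shares)


# Hypothetical share distributions, invented for illustration.
concentrated = [0.90, 0.04, 0.03, 0.03]  # one dominant industry
diffuse = [0.14] + [0.086] * 10          # many industries, none dominant

print(round(concentration_index(concentrated), 2))
print(round(concentration_index(diffuse), 2))
```

Under this assumed formula, a program that sends 90 percent of graduates into one industry scores far higher than one whose largest industry takes only 14 percent, which matches the ordering in Table 1.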

Where pathways are concentrated, earnings are more predictable because they are shaped by a relatively uniform set of industry dynamics. Where pathways are diffuse, earnings reflect multiple, very different compensation structures and career trajectories. In those cases, there is no single “expected” outcome to measure.

What emerges is not a simple story about high- and low-earning programs, but about fundamentally different kinds of pathways. In some fields, graduates move into relatively well-defined industries with more uniform earnings structures, making outcomes more predictable. In many others, graduates scatter across a wide range of industries and roles, where earnings are shaped by very different dynamics. In those cases, there is no single earnings outcome to measure—only a distribution reflecting multiple systems. Treating those outcomes as comparable, and attributing them to institutions, collapses that structure into a single number and mistakes dispersion for performance.

4) Time changes everything

The final layer is time. And here the limitations of earnings-based accountability become even clearer. The Ithaka studies track outcomes over ten years, and across all three dimensions—geography, earnings dispersion, and pathways—one pattern is consistent: these structures do not remain static. They evolve.

The urban–rural gap, for example, grows over time for bachelor’s graduates, suggesting that location-based differences compound as careers progress. By contrast, for associate degree holders, those gaps tend to narrow, reflecting different relationships to local labor markets.

Earnings dispersion also changes over time, but not uniformly. In some fields, gaps between top and bottom earners narrow as careers stabilize. In others, they widen as high earners pull away. What looks like a modest difference early in a career can become a substantial divergence later.

Pathways, too, evolve. Graduates move across industries, specialize, or advance into new roles, meaning that early employment patterns do not fully capture long-term trajectories.

The implication is straightforward but important. Earnings at four or five years post-graduation do not represent a stable endpoint. They capture graduates at an early stage, before many of these dynamics have fully played out. And because these dynamics depend on field, pathway, and labor market structure, they are not predictable. Extending the time horizon does not resolve the problem; it simply shifts when we observe it.

What this means: A problem of attribution

The accountability framework rests on a simple logic: earnings reflect institutional quality and program value. The Ithaka reports complicate that picture. They show that graduate earnings are shaped by at least four structural factors.

Geography. Graduates of urban institutions earn more than those of rural institutions. But those differences reflect labor markets as much as institutions. Rural institutions serve rural students, and those students are more likely to remain in rural economies where wages are structurally lower. Earnings reflect location, not simply institutional quality.

Earnings structure. Outcomes vary dramatically within fields. In some programs, earnings are tightly clustered. In others, the spread is enormous, with top earners making several times what those at the lower end earn. That variation is not noise—it reflects how opportunity is structured within the field.

Pathways. Some programs lead into relatively well-defined industries with predictable roles and pay. Others disperse graduates across multiple industries, each with different compensation systems. In those cases, there is no single outcome to measure—only a distribution.

Time. Early-career earnings often obscure longer-term trajectories, particularly for bachelor’s graduates whose earnings premiums emerge over time. But these changes are neither standard nor predictable.

Taken together, these findings point to a deeper issue. Earnings are not solely an institutional output. They are a joint outcome of labor markets, earnings structure, pathways, and time. When we use earnings to judge institutional quality, we are not just simplifying reality; we are attributing to institutions what is actually produced by systems.

That misattribution has consequences.

It will penalize rural institutions, which already operate in lower-wage labor markets and serve students more likely to remain in them.

It will disadvantage high-variance fields, where outcomes are inherently dispersed and where many graduates contribute to innovation and long-term economic growth.

It will incentivize institutions to narrow their program offerings, particularly by under-investing in low-floor fields that are nonetheless socially important.

Institutions matter, but they operate within systems that shape the outcomes we observe. And because these earnings outcomes are structurally determined, refining the metric will not solve the problem. Adjusting for geography or extending the time horizon may improve precision, but neither addresses the underlying issue. We would still be measuring the wrong thing.

Earnings are shaped by systems. Treating them as a direct measure of institutional quality does not just oversimplify reality. It risks distorting it.

This is not an argument against using earnings data. It is an argument against treating them as a single direct measure of institutional quality.

The main On EdTech newsletter is free to share in part or in whole. All we ask is attribution.
